Virtual reality reveals the interiors of animal bodies

By means of an immersive virtual reality headset, students at the University of Mons are now discovering animals’ internal systems thanks to mind-blowing 3D reconstructions.

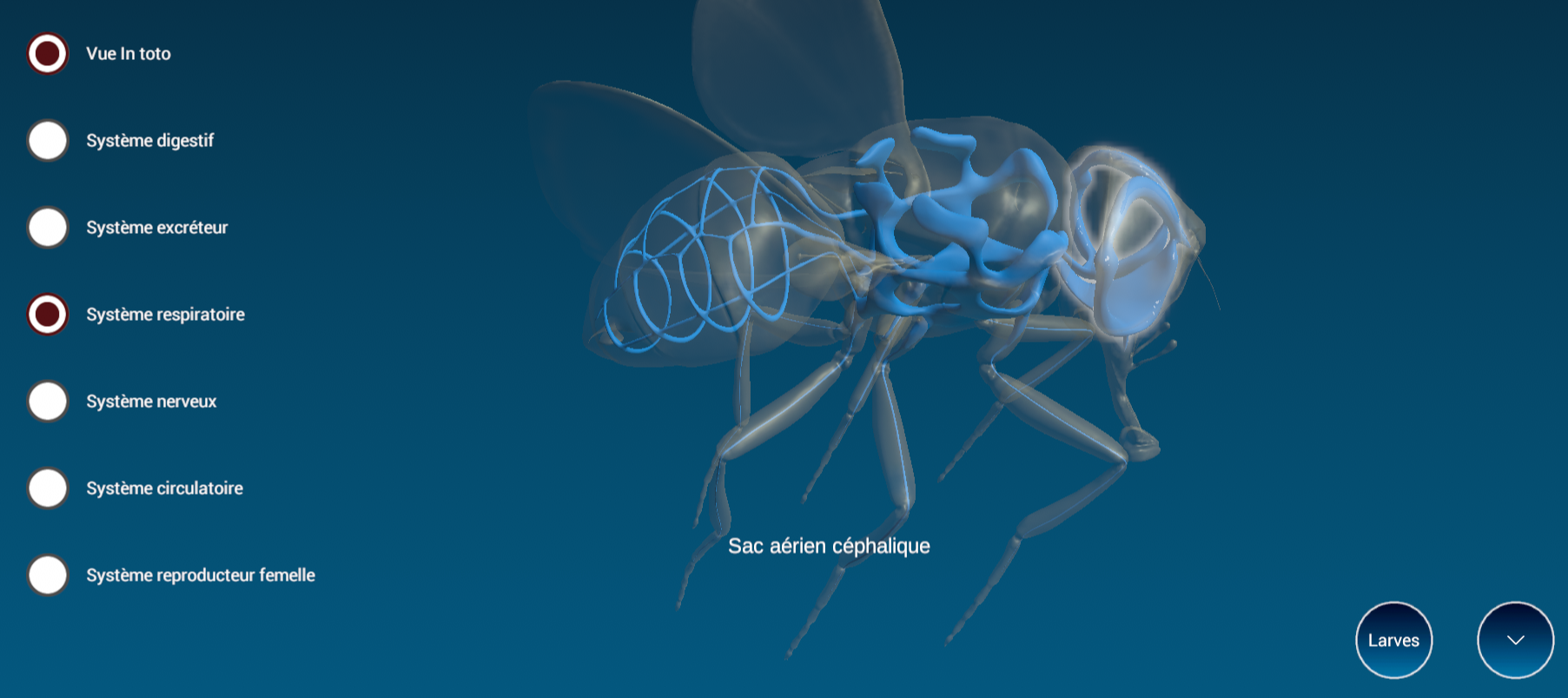

Noses in the air, hands at work: a skilful ballet performed in thin air. Animal biology students at UMons, virtual reality headsets strapped on, dive into the bodily mysteries of the fly. On the screen they can see the big picture. With movements of their hands, they turn the avatar in every direction so that its slightest detail can be scrutinised. How does it breathe? The fly has no lungs, but instead a network of tracheae which runs through its body. When ‘respiratory system’ is selected, its 3D representation is displayed on the screen, accompanied by annotations distinguishing the tracheal segments. ‘Virtual reality gives us a better view of the arrangement of certain organs in relation to others; it’s a lot more vivid than in 2D!’ exclaims one of its users. ‘You can keep turning the avatar in every direction, it’s wonderful as a means of understanding,’ adds a classmate.

This innovation, named MetaMorphos VR, is the fruit of work undertaken by Nathan Puozzo, a programmer, at the instigation of Igor Eeckhout, Director at the UMons Biology of Marine Organisms and Biomimetics Laboratory. It is intended to be used by zoologists, students, naturalists, and anyone who wants to understand animal architecture. And it is available on request from the Laboratory, as simple as that.

‘The MetaMorphos application is a real innovation which is having a positive impact on the teaching of zoology by illustrating certain theoretical concepts introduced in the courses. The modelling allows students to better visualise the organisation of the internal organs of various animals and shows precisely how each system (digestive, respiratory, excretory, etc.) is positioned in their bodies,’ summarises Guillaume Caulier, a lecturer on the Bachelor’s programme in Biology. ‘MetaMorphos is a serious alternative to dissection and thus opens the way to more ethical practices in the teaching of biology, even if it does not yet allow us to do away with dissection completely, as it remains an essential practical skill in the training of biologists.’

From adjustable avatars to coloured systems

Spider, jellyfish, anemone, snail. For the moment, 15 metazoan animals (multicellular, in other words) of a small size can be viewed in the application. And the team has no intention of resting on its laurels, as there is more to come. ‘Our goal is to model two new animals each year,’ specifies Nathan Puozzo.

‘The application allows the external morphology of the avatars to be analysed, as well as their systemic organisation. The organ systems visualised are the digestive (yellow), excretory (green), nervous (white), reproductive (mauve), circulatory (red) and respiratory (blue) systems,’ points out Professor Igor Eeckhout, the project’s founder. The colour code used for each system is identical for all of the animals modelled.

An organ system can be visualised alone or with other systems, either in toto (the body of the animal is thus translucent) or without the representation of the translucent body of the animal.
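For readers curious how such a display scheme might be organised in software, here is a minimal sketch in Python. It is not the MetaMorphos code: the `AvatarView` class and its method names are hypothetical, and only the colour code and the two display modes (with or without the translucent body) come from the description above.

```python
from dataclasses import dataclass, field

# Colour code quoted in the article; it is the same for every animal modelled.
SYSTEM_COLOURS = {
    "digestive": "yellow",
    "excretory": "green",
    "nervous": "white",
    "reproductive": "mauve",
    "circulatory": "red",
    "respiratory": "blue",
}


@dataclass
class AvatarView:
    """Hypothetical display state for one animal avatar (illustration only)."""

    animal: str
    visible_systems: set = field(default_factory=set)
    show_translucent_body: bool = True  # True corresponds to the "in toto" view

    def show(self, system: str) -> None:
        """Make one organ system visible, alone or alongside others."""
        if system not in SYSTEM_COLOURS:
            raise ValueError(f"unknown organ system: {system}")
        self.visible_systems.add(system)

    def hide(self, system: str) -> None:
        """Hide one organ system; hiding an already hidden system is a no-op."""
        self.visible_systems.discard(system)

    def render_plan(self) -> list:
        """Return (layer, colour) pairs, organ systems first, body shell last."""
        layers = [(s, SYSTEM_COLOURS[s]) for s in sorted(self.visible_systems)]
        if self.show_translucent_body:
            layers.append(("body", "translucent"))
        return layers
```

Keeping the colours in a single shared table, rather than storing them per animal, is one simple way to guarantee that the code stays identical across all avatars.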

Spatial manipulations

The avatar can be handled and positioned in all the dimensions of space and a ‘zoom’ function allows the part of the body being analysed to be magnified. ‘To see underneath the animal, you have to manipulate the two virtual reality controllers or both hands at the same time. When you pinch the forefinger and the thumb, it activates the control knob. If you make this movement with your two hands at the same time, that makes the virtual animal rotate,’ explains the application’s developer.
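The two-handed pinch gesture can be sketched roughly as follows. This is an illustration under stated assumptions, not the developer's implementation: the 2 cm pinch threshold, the (x, y, z) coordinates in metres with y pointing up, and the restriction to rotation about the vertical axis are all choices made for this example.

```python
import math

# Assumption: fingertips closer than 2 cm count as a pinch.
PINCH_THRESHOLD_M = 0.02


def is_pinching(thumb_tip, index_tip, threshold=PINCH_THRESHOLD_M):
    """True when thumb and forefinger tips are close enough to count as a pinch."""
    return math.dist(thumb_tip, index_tip) < threshold


def yaw_between_hands(left, right):
    """Heading (radians) of the left-to-right hand vector in the horizontal x-z plane."""
    dx = right[0] - left[0]
    dz = right[2] - left[2]
    return math.atan2(dz, dx)


def rotation_delta(prev_left, prev_right, left, right):
    """Change in heading between two frames, wrapped to the range [-pi, pi)."""
    delta = yaw_between_hands(left, right) - yaw_between_hands(prev_left, prev_right)
    return (delta + math.pi) % (2 * math.pi) - math.pi


def update_avatar_yaw(avatar_yaw, left_pinch, right_pinch,
                      prev_left, prev_right, left, right):
    """Rotate the avatar only while both hands are pinching at the same time."""
    if left_pinch and right_pinch:
        return avatar_yaw + rotation_delta(prev_left, prev_right, left, right)
    return avatar_yaw
```

In this sketch, turning both pinched hands around each other, like turning a steering wheel laid flat, rotates the avatar by the same angle; releasing either pinch freezes it in place.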

‘Each system is also manipulable individually. In addition, there is a spatial demarcation of each of the system’s constituent components and an annotation. For example, for the fly avatar, the stomach is easily distinguished from the intestines.’

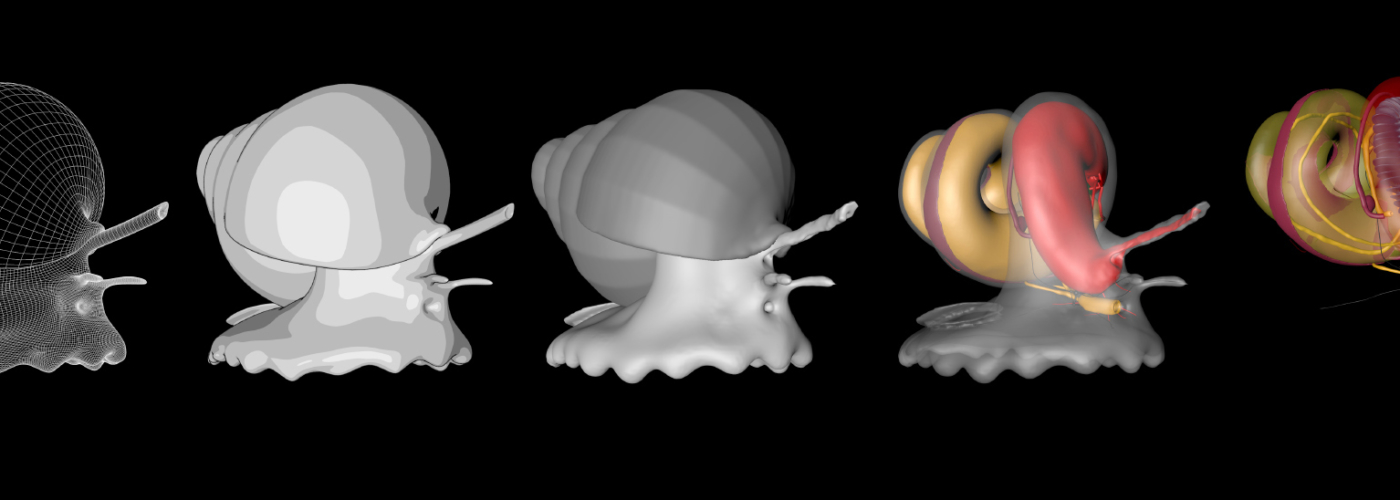

A multiplayer version already in the pipeline

This application is a long-term endeavour. It was, first of all, necessary to model the animals one by one, whilst respecting the position of the organs and representing them to scale. ‘That is carried out by ping-pong collaboration: on the basis of drawings, dissections, videos and diagrams on the internet, I produce a first draft in 3D of the animal avatar’s outer shell. Then the teachers make comments so that I can improve it. We advance step by step. Then we move on to the internal systems. This back-and-forth work depends on the complexity of the animal to be modelled, but takes about a month for each avatar,’ explains Nathan Puozzo. Initially, that led to an application which could be used exclusively on a PC and is available at https://www.bio-mar.com/metamorphos.

The developer then worked on converting the application into virtual reality. ‘As of now, in parallel with modelling new animals, we are thinking about a new iteration of MetaMorphos. At the moment, each user can manipulate the animals in 3D, but is isolated from the user sitting next to them. We are working on a shared view: teachers will thus be able to show a particular aspect of a particular system and all the headset users will see it at the same time. This development should enhance the pedagogical value. A first version is already complete, and the first round of feedback should reach us in September,’ he continues.

Growing pedagogical interest

In recent weeks, the MetaMorphos application has been presented at various fairs and conferences, and it is clearly winning over its audience. It has been awarded the ‘Coup de Cœur’ prize at the Mind & Market competition, and has also received an accolade for pedagogical innovation for academic purposes at the 2023 Teachers Day.

‘There is growing interest. Other lecturers at the university, especially in crystallography, have contacted me, because they would like to use virtual reality in their courses, to adapt the concept to their area of expertise.’

If the higher education students appear utterly convinced, the same is true for the very youngest pupils. ‘A trainee primary school teacher from the pedagogy research department took the tool into her first-year primary class. She first of all asked the pupils to draw a fly: their drawings resembled something like 2D potatoes. She then got them to wear virtual reality headsets, view the outer shell of the fly in 3D and manipulate the avatar. The result? Once they had removed their headsets, the children’s drawings of flies were much more precise and more three-dimensional than their first sketches,’ a delighted Nathan Puozzo informs us. The chances are that the concept he has developed will be a must-have in classrooms in the years to come.
